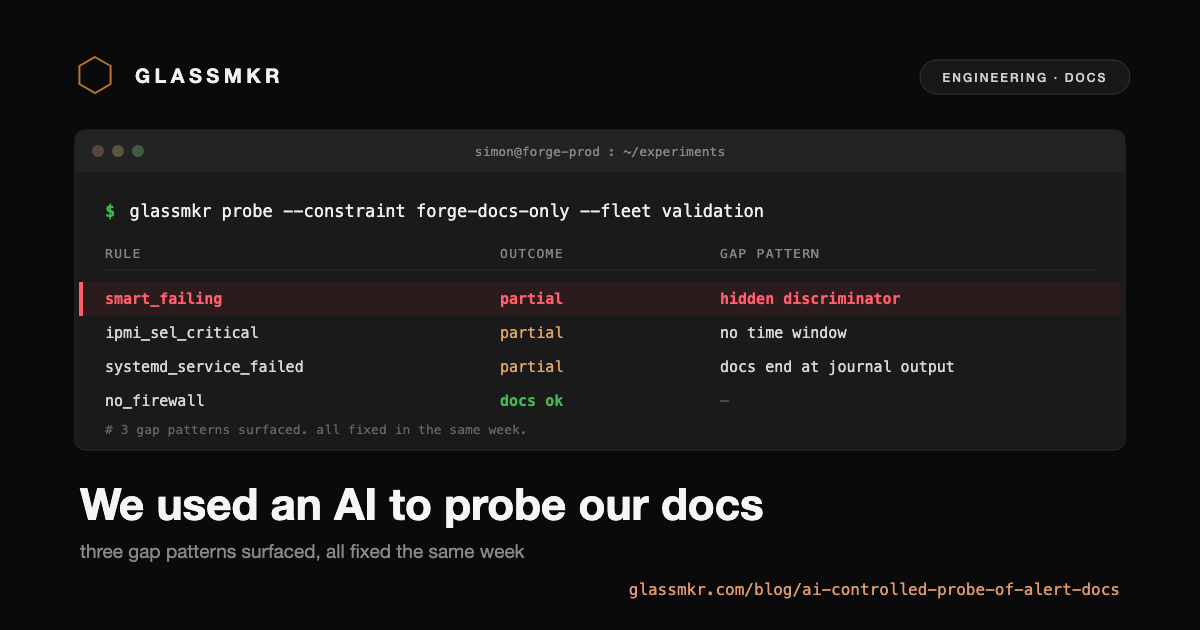

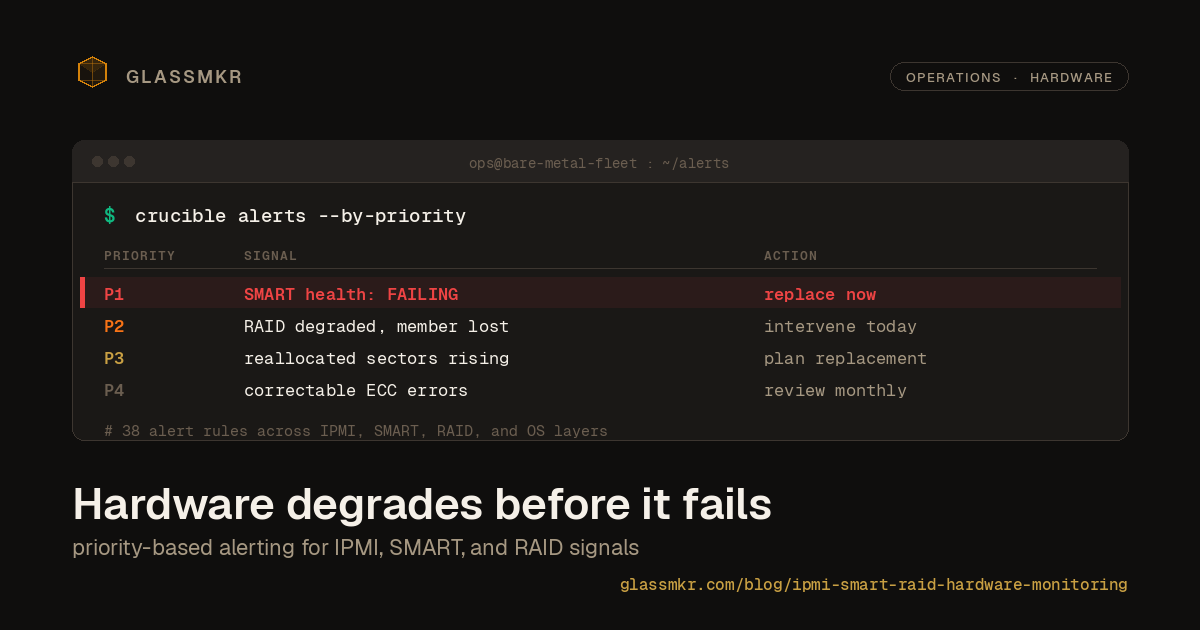

We used an AI as a controlled probe of our alert documentation

We forbade an AI from using its training data and made it resolve real infrastructure alerts using only the guidance our own dashboard produces. Three gap patterns surfaced. All three were fixed in the same week.